Introduction

This is the sixth installment in the “From the Archives – Bits & Bobs” series. Volume I is here, Volume II is here, Volume III is here, Volume IV is here, and Volume V is here. But the different volumes have nothing to do with one another.

Yes, it’s another slow news day. So, taking a cue from writers like David Sedaris and Lydia Davis, I’m assembling and posting some excerpts from some old letters. If I too were a famous writer, these micro-dispatches could be gathered into a handsome printed volume sold in bookstores everywhere (i.e., I could basically print my own money). Instead, since I’m an unsung blogger, you can read them for free!

These ones were all written while I was a student at Berkeley.

October 26, 1990

My schedule is so weird, I have to do my cycling indoors sometimes, even when the weather is great. I don’t ride my rollers in the basement laundry room anymore, though. Here’s why. They have this timer knob hooked up to the overhead light, to keep it from being left on. I was riding my rollers in there in the pre-dawn hours before work, and gave no thought to the timer. So I was riding along and suddenly there was this loud “DING!” and the lights went out. I crashed instantaneously. My Walkman went sailing across the room and broke into like three pieces. So now I’m keeping the rollers in the apartment and riding them there. I have this headwind accessory that makes them harder than actual riding. Good complement to all the hills around here.

February 12, 1991

I suddenly find myself in the terrifying position of going head-to-head with the most depraved, cruel band of thugs the free world has ever known. These miscreants have no regard for peace of mind; they loot solace and tranquility like a roadside gas station or liquor store. Meet Mr. First‑and‑Last‑Month Rent: wanted in six states for Financial Burden with Intent to Ruin. Mr. Damage Deposit: known to be armed and dangerous, wanted by federal authorities for Monetary Assault on a College Student. A recent stakeout found that these two are operating with an accomplice: Kid Tuition and his gang, the Three Installments. What could all this mean? Only one thing: I’m laying it all on the line by finding a new apartment. Which means raising a lot of cash in a hurry. Got any quasi-legal, or (what the hell) totally illegal hustles you can bring me in on?

July 16, 1991

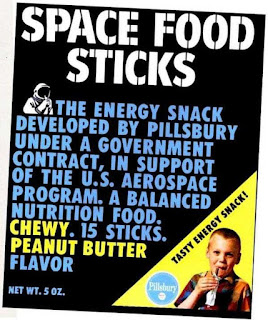

Our local sales tax has been raised to 8.25%, and extended to cover snack foods. This is interesting: candy bars and potato chips are taxed, while fresh produce and wheat flour are not. It gets complicated: all crackers are taxed, except Saltines. Popped popcorn is taxed, but not un-popped. (Do you get a rebate on the un-popped “old maids” in the popped popcorn?) At the bike shop [where I work] these items present some complications. Powerbars, for example, contain 100% of the U.S. R.D.A. of most vitamins and minerals, and have only a gram of fat: hardly typical snack food. Yet, they still don’t really qualify as grocery. So today, in my capacity as shop manager, I put the following notice on the Powerbar display:

DEAR CUSTOMERS:

Recently enacted tax legislation requires us to charge the new tax rate (8.25%) on all snack foods. Since Powerbars and XL 40 bars are “great anytime as a snack” they are now taxable items. But since Exceed High Carbohydrate Source “supplies as much carbohydrate as 6 cups enriched spaghetti, 8 medium sized baked potatoes, or 11 slices of white bread,” it qualifies as a grocery item and is therefore not taxed.

September 19, 1991

Here is the opening passage of the article I was supposed to have read for today for my Honors English seminar:

Perhaps something has occurred in the history of the concept of structure that could be called an “event,” if this loaded word did not entail a meaning which it is precisely the function of structural or structuralist thought to reduce or to suspect.

Keeping in mind that the opening sentence is supposed to lure the reader into further study, I find this beginning atrocious. But amazingly, it gets worse:

It would be easy enough to show that the concept of structure and even the word “structure” itself are as old as the episteme—that is to say, as old as western science and western philosophy—and that their roots thrust deep into the soil of ordinary language, into whose deepest recesses the episteme plunges to gather them together once more, making them part of itself in a metaphorical displacement.

For me to be reading this might suggest that I’m some kind of elite student who can grasp really complex ideas. But the sad truth of the matter is that I am no better equipped to handle this than you are. Probably less equipped, actually, given my youth and naiveté. This essay rages on for twenty-six pages, getting increasingly obtuse, and I never made it past the first five pages, with zero percent comprehension. Thus in one day I have fallen hopelessly behind, since the rest of the reading for this course (one such essay per class day) is doubtless based on this foundational essay.

So I walked into class this morning very ill prepared, and thought to myself, “Self, don’t despair, the rest of the class is probably as overwhelmed as I am.” This day I happened to be early, for—having given up my attempt to read the essay—I had nothing better to do than to make a big breakfast and leave for school well ahead of time. I asked the first fellow student I saw what he thought of the essay. “I really enjoyed it, how about you?” he said, apparently with perfect sincerity. I told him that to be perfectly honest, I had found it somewhat difficult. He frowned, as if surprised to hear that, and said, “I found it similar to Beckett, didn’t you?” I vaguely remember a Sir Thomas Becket who wrote some religious thing, maybe about people flogging themselves or something, but couldn’t make a connection. [I realized weeks after writing this my classmate meant Samuel Beckett.]

I spent the class period realizing that for perhaps the first time, I’m hopelessly outclassed by my peers. I start to fret and sweat, it gets hot, I can’t get away, the prof rolls, I’m popped. How’d I get into this spot? I played myself. But why am I facing off against this fierce foe? The faculty’s fault? No. Nobody signed me up for the course; nobody enrolled me by force. I thought that I could excel at this big school? Fool. I played myself. [Apologies to Ice-T.]

February 27, 1992

I was studying yesterday in Sproul Plaza, sitting on a bench, and an old man came and sat down by me, with nothing to occupy him but people watching. Eventually we had some conversation: he’s 85 years old (didn’t look it!) and from Russia. He didn’t seem to recognize Lolita, which I was reading, and was surprised at my choice of the English major, having studied engineering himself. He says in Russia, those with a college degree are admitted to a special grocery store that is closed to those without one. Same prices in the alumni-only store, but much vaster variety and availability of goods. He didn’t say how the store vets these people, and I didn’t ask.

During the big rains we had a week or so ago, I parked my bike under the bridge between LeConte Hall and Birge Hall, just east of Sather Tower, before going into Doe Library (through the back entrance, as a construction project has blocked the front and snarled most paths through campus). It wasn’t raining that afternoon but my precaution was well taken: when I finally left the library in the late evening, the rain had started up again. Groggy from my scholastic haze, I was shocked to find that nature and weather and the out-of-doors had all continued without me. Riding down Bancroft, I was impressed by the electrifying effect the rain had: extra-shiny reflections on the cars, and extra-black asphalt with neon-like red reflections of taillights.

In five minutes I’ll leave to go tutor at Malcolm X school. My student, “Eddie,” is trying to imitate my out-loud reading by going as fast as he can. This means the words come out at the same pace as before, but with a tone of frantic urgency and almost no pause between words. I sometimes worry that he isn’t retaining what he has read, but last week we worked together on a book report and I found he’s getting most of it. His dopey teacher had acted like he’s a hopeless case, totally checked out, etc. but I find he works pretty hard if someone’s around to help him focus.

May 25, 1992

Doesn’t it piss you off when you get a defective band-aid? At the bike shop we have Curad. As you know, these are not the band-aids we grew up with, and I find myself somewhat hostile to them. Their lack of quality control is one of the small but nagging travesties that plague my employment at the shop. I would say about one in three Curad band-aids is defective. The little sanitary cotton pad is skewed, and sometimes has missed the center of the adhesive portion, so you get just a little triangle of pad, and the rest is free to stick to the wound. I really can’t believe the bandaging public tolerates Curads at all. At home I use Johnson & Johnson Band-Aid brand. This becomes expensive since I’m constantly washing dishes or taking my contact lenses out or something. I’m tempted to just leave the wet band-aids on and let them dry out, but I called Johnson & Johnson on their toll free number and asked about this. “You know how the box says they’re waterproof?” I asked. “Oh, yes, they certainly are,” said the lady earnestly, in her charming southern accent. “Well, does that mean I don’t need to change them once they’re wet?” I asked. “Oh, no, you should always replace them—that’s the only way to prevent bacteria from infecting the wound,” she replied. Puzzled, I asked, “Then what’s the benefit of their being waterproof?” A long pause. Finally she said, “Well, if you’re swimming you won’t lose them in the pool. And, they won’t go down the drain.”

September 12, 1992

I have purchased Lila: An Inquiry into Morals by Robert Pirsig, author of the classic Zen and the Art of Motorcycle Maintenance. I hope this second book is good, but I have little faith that it’ll live up to its predecessor. I really loved the Motorcycle book, but the really lame Epilogue, which Pirsig attached to it like a decade later, irritated me so much that I tore it out of the book. This act was, I’ll confess, tantamount to destroying knowledge and human thought itself. Even worse, it loosened the cheap glue binding on the whole book, so that eventually all the pages started falling out—a disaster, especially since this is the one book I really wanted to pass around among my friends. It’s a really shoddy paperback to begin with, from the imprint “BANTAM NEW AGE BOOKS: A Search for Meaning, Growth, and Change.” Other Bantam New Age editions include Ecotopia, Shambhala: The Sacred Path of the Warrior, and The Complete Crystal Guidebook. What bothers me isn’t so much the nature of these books by themselves—although that alone is annoying—but rather the very idea that Bantam could integrate so many conflicting and discrete books into a single group, as if they could all be united as life philosophies. Like somebody is going to just go right on down the list, finding Meaning, Growth, and Change in every one of these. It’s tempting to think that when Pirsig wrote Zen and the Art of Motorcycle Maintenance, he would have winced at having his work co-opted into the Bantam New Age menagerie. But actually, the epilogue that he added later totally smacked of the easy, wildly speculative, faux-spiritual sentimentalism that the “NEW AGE BOOKS” line can be presumed to have. Who knows, maybe Pirsig got cocky; after all, his book sold like crazy and impressed a lot of people, many for perhaps the wrong reasons. Anyway, I still have the decrepit Motorcycle paperback, and without the Epilogue it is a work of pure genius. I’ll let you know how Lila ends up going.

—~—~—~—~—~—~—~—~—

Email me here.

For a complete index of albertnet posts, click here.